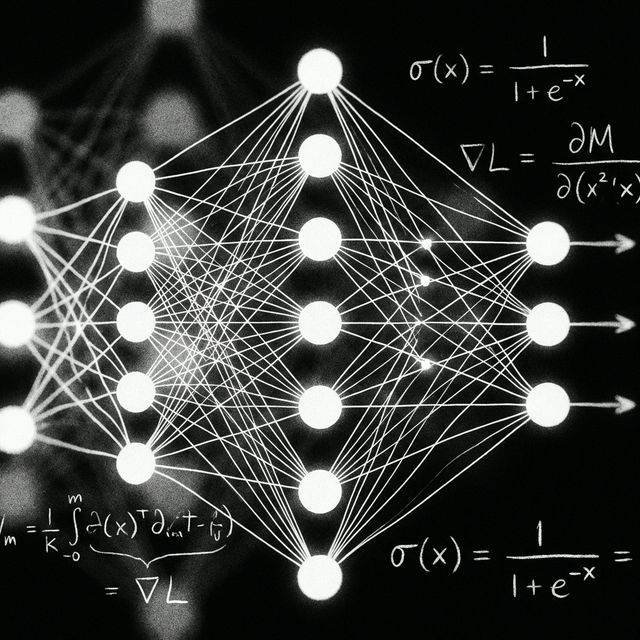

Neural Network

Evaluating Open-Weight Models

We evaluate LLMs, image synthesis models, and high-dimensional vector embeddings on local GPU hardware, keeping all data on-premises for security research while benchmarking multimodal inference.

Evaluating local models.

The neural infrastructure in the Buildations lab provides a controlled environment for testing and evaluating a variety of open-weight models. By running LLMs, image synthesis models, and embedding models on distinct hardware profiles, we study inference efficiency and the data sovereignty implications for autonomous defense systems.

Key References

Touvron, H., et al. (2023). "Llama 2: Open Foundation and Fine-Tuned Chat Models." arXiv preprint arXiv:2307.09288.

Local inference pipeline.

All AI workloads run on local GPU hardware — no cloud APIs, no data leaving the network. Ollama serves LLM inference, Qdrant stores embeddings for RAG, and ComfyUI handles image generation through Stable Diffusion.
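As a rough illustration of what a fully local request can look like, the sketch below builds a non-streaming call to Ollama's `/api/generate` endpoint and derives decode throughput from the `eval_count` and `eval_duration` fields of the reply. The default address `localhost:11434`, the model tag `llama3.2`, and the helper names are assumptions for illustration, not the lab's actual configuration.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def tokens_per_second(response: dict) -> float:
    """Decode throughput from eval_count and eval_duration (nanoseconds)."""
    duration_ns = response.get("eval_duration", 0)
    if duration_ns <= 0:
        return 0.0
    return response.get("eval_count", 0) / (duration_ns / 1e9)


def generate(prompt: str, model: str = "llama3.2", timeout: float = 120.0) -> dict:
    """Send the prompt to the local server and return the parsed JSON reply."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With a server running, `generate("...")` returns a dictionary whose `response` field holds the generated text, and passing that dictionary to `tokens_per_second` gives a simple figure for comparing hardware profiles. The request never leaves the loopback interface.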

Llama 3.2

Advanced language understanding with GPU-accelerated inference. Conversational AI, code generation, and analysis.

Stable Diffusion XL

High-quality image synthesis from text prompts via ComfyUI node workflows with custom checkpoints.

Whisper Large

Multilingual speech recognition and translation. Local processing with no audio data leaving the network.

CodeLlama

Specialized code understanding, generation, and debugging across multiple programming languages.

CLIP

Image classification and semantic search. Powers visual similarity queries in the vector database.

Embeddings

Semantic similarity and RAG pipeline. Documents are chunked, embedded, and stored in Qdrant for context retrieval.
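The chunk, embed, store, and retrieve steps can be sketched in a few lines. This is a minimal, self-contained illustration: `toy_embed` is a hypothetical hashed bag-of-words stand-in for a real embedding model, and `InMemoryStore` is a hypothetical stand-in for a Qdrant collection, so none of the names below reflect the lab's actual code or the Qdrant client API.

```python
import hashlib
import math


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashed word counts, L2-normalized."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.sha256(word.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class InMemoryStore:
    """Hypothetical stand-in for a vector database collection."""

    def __init__(self) -> None:
        self.points: list[tuple[list[float], str]] = []

    def upsert(self, text: str) -> None:
        """Embed a chunk and keep the vector alongside its text."""
        self.points.append((toy_embed(text), text))

    def search(self, query: str, limit: int = 3) -> list[tuple[float, str]]:
        """Return the `limit` chunks with the highest cosine similarity to the query."""
        q = toy_embed(query)
        scored = [(sum(a * b for a, b in zip(q, vec)), text) for vec, text in self.points]
        return sorted(scored, key=lambda pair: pair[0], reverse=True)[:limit]
```

Because the vectors are normalized, the dot product in `search` is the cosine similarity. In a real pipeline the retrieved chunks would be placed in the LLM prompt as context, and the toy embedding would be replaced by a trained model.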

Adaptive security ML.

The neural infrastructure does more than serve chatbots: it also powers the adaptive defense engine. ML models process security logs from honeypots and IDS sensors and learn to identify new attack patterns, so detection improves as more attack traffic is observed.
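The page does not say which models the defense engine uses. As a deliberately simple stand-in, the sketch below scores each log line by how surprising its tokens are relative to everything seen so far, then learns from it. The class name `NoveltyScorer`, the whitespace tokenization, and the add-one smoothing are all assumptions chosen for illustration.

```python
import math
from collections import Counter


class NoveltyScorer:
    """Scores log lines by how rare their tokens are in the traffic seen so far."""

    def __init__(self) -> None:
        self.token_counts = Counter()
        self.total = 0

    def score(self, line: str) -> float:
        """Mean surprisal in bits of the line's tokens, with add-one smoothing."""
        tokens = line.lower().split()
        if not tokens:
            return 0.0
        vocab = len(self.token_counts) + 1  # +1 reserves mass for unseen tokens
        bits = 0.0
        for tok in tokens:
            p = (self.token_counts[tok] + 1) / (self.total + vocab)
            bits += -math.log2(p)
        return bits / len(tokens)

    def learn(self, line: str) -> None:
        """Update token statistics so later scores reflect this line."""
        tokens = line.lower().split()
        self.token_counts.update(tokens)
        self.total += len(tokens)
```

Calling `score` before `learn` on each incoming line gives higher values to requests unlike earlier traffic, such as a path traversal attempt arriving after a run of ordinary page fetches. A production system would likely use richer features and a trained model, but the score-then-update loop has the same shape.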